Understanding Scholarship: The Evidence Hierarchy

Reassessing the Value of Different Types of Evidence

Note: This essay was prepared with the research assistance and ghostwriting of ChatGPT 4.0. No LLMAI were harmed in the process, although I felt inclined to threaten them from time to time.

Author's Preface

A few years back I prepared a bibliography of papers on random controlled trials (RCT) and the evidence hierarchy, which is pretty extensive. I have 128 references I think. I read some in detail and skimmed a lot more. I used Zotero for record keeping, free software, which is excellent for recording bibliographic information.

I have not stopped thinking about that issue. I encounter the claim from time to time that the only research standard worth worrying about is the gold standard of RCTs. I've always thought that's probably not correct according to what I've read, articles written by very respectable scholars. In my view, that opinion on RCTs is all nonsense. There are all kinds of exceptions.

I did study research methods and statistics in university. That was a long time ago, the 1970s. I learned methods of research of experimental psychology: statistics and research designs. I went to graduate school.

I don't remember the term random controlled trials being bandied about but we certainly learned the principles. Maybe the term was just one I have forgotten. We certainly learned the principles.

In any case as part of my “Understanding Scholarship” series, I thought I'd address random controlled trials and the evidence hierarchy. I also felt the need to rant about the claim by skeptics: “data are not the plural of anecdote”. I know that's an inversion of the original claim which is “data are the plural of anecdote”.

Introduction

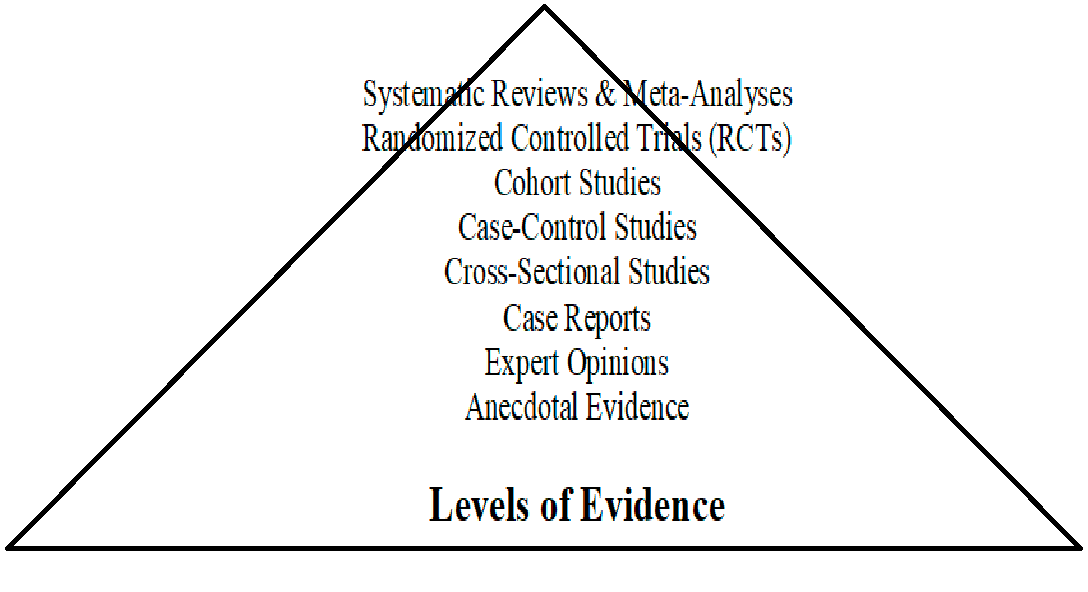

The evidence hierarchy is a key framework in research, ranking various types of evidence based on their reliability and ability to demonstrate causality. It has been most widely used in fields such as medicine, psychology, and public health. However, the rigid application of the hierarchy is increasingly questioned in various disciplines, as its relevance may vary based on the nature of the field being studied. This essay explores the structure of the evidence hierarchy, its applicability, and its limitations across different academic domains. Additionally, it will cover the methodology behind research studies, including the purpose of determining causality, the handling of confounding factors, and the limitations posed by small effect sizes and large variability. The discussion will also highlight how the phrase "data are the plural of anecdote," has been distorted by pseudo-skeptics, reversing the original meaning.

What is the Evidence Hierarchy?

Overview of the Evidence Hierarchy (with Criticisms Considered)

The evidence hierarchy is commonly used to rank research methods based on their perceived reliability and ability to establish causality. However, it has been criticized for being overly rigid and for not adequately reflecting the complexity of real-world research contexts. Below is a more nuanced description of each level, incorporating these criticisms.

1. Systematic Reviews & Meta-Analyses

Description: These synthesize data from multiple studies, aiming to provide comprehensive conclusions. However, they are dependent on the quality and consistency of the underlying studies, which may vary. Systematic reviews can also be prone to publication bias, where only positive results are published.

Example: A review combining data from several drug trials to assess overall effectiveness.

2. Randomized Controlled Trials (RCTs)

Description: RCTs are valued for their ability to reduce bias by randomly assigning participants to groups. However, they can be expensive, time-consuming, and not always ethically feasible. Additionally, real-world complexity can limit their external validity, meaning the findings may not apply to broader populations.

Example: A drug trial with participants randomly assigned to receive either the treatment or a placebo.

3. Cohort Studies

Description: These observational studies track groups over time, offering insights into long-term effects. They are useful when RCTs are not feasible but are more vulnerable to confounding variables, which can distort results.

Example: A study following smokers and non-smokers over 20 years to observe lung health outcomes.

4. Case-Control Studies

Description: These studies compare individuals with and without a condition to identify potential causes. They are useful for studying rare diseases but are limited by their retrospective nature and the risk of recall bias.

Example: A study comparing cancer patients to non-patients and analyzing their history of exposure to certain chemicals.

5. Cross-Sectional Studies

Description: These offer a snapshot of a population at a specific time, useful for identifying associations but not for establishing causality. They are efficient but limited in their ability to account for the complexities of time or change.

Example: A survey measuring the prevalence of diabetes in a population.

6. Case Reports

Description: Case reports provide detailed descriptions of individual or small group cases, often highlighting rare or novel phenomena. They are useful for generating hypotheses but are not generalizable and lack rigorous controls.

Example: A physician’s report on a rare drug side effect observed in one patient.

7. Expert Opinions

Description: Expert opinions are based on professionals’ experience and knowledge. While valuable in contexts lacking empirical data, they are highly subjective and vary based on the expert’s background and biases.

Example: A clinician’s guidance on best practices for treating a rare disease.

8. Anecdotal Evidence

Description: Anecdotal evidence is based on individual experiences or personal accounts. While it can provide initial insights, it is highly subjective and unverified, making it the least reliable form of evidence.

Example: A patient’s account of how a specific diet improved their symptoms.

---

Adjustments Based on Criticisms

Each of these levels offers varying degrees of reliability, but the usefulness of the evidence hierarchy depends on the context in which it is applied. It is important to remain critical of its limitations and to integrate other methods when necessary, recognizing that no single approach can provide all the answers in complex real-world scenarios.

The evidence hierarchy categorizes research methods from most to least reliable, with the aim of offering a clear ranking of study designs based on their capacity to establish causality and minimize bias. At the top of the hierarchy, systematic reviews and meta-analyses combine data from multiple studies to provide a high level of reliability, while randomized controlled trials (RCTs) rank just below, being the most trusted individual study design. Lower on the hierarchy are cohort studies, case-control studies, cross-sectional studies, and case reports. At the bottom lie expert opinions and anecdotal evidence, which provide weaker forms of evidence that lack empirical rigor (Freemantle et al., 2003; Greenhalgh, 2014; Sackett et al., 1996).

Applicability or Inapplicability of the Evidence Hierarchy Across Scholarly Disciplines

The evidence hierarchy is particularly useful in fields where experimental control is feasible, such as medicine and public health (Guyatt et al., 1995; Sackett et al., 1996). However, its strict application is less relevant in disciplines like archaeology, history, and education, where observational methods or qualitative data are often the primary sources of evidence (Cartwright & Deaton, 2018). Critics argue that applying a rigid hierarchy can lead to the undervaluation of valuable research, particularly in areas that rely on narrative-based methods or contextual understanding (Greenhalgh, 2014).

The Evidence Hierarchy Levels: Pros and Cons

1. Systematic Reviews & Meta-Analyses

Pros:

Aggregates multiple studies, minimizing biases (Guyatt et al., 1995).

Offers broad generalizability across populations (Freemantle et al., 2003).

Can resolve conflicting findings from individual studies (Greenhalgh, 2014).

Efficiently synthesizes a large body of research (Allen et al., 2015).

Highlights gaps in existing research (Ioannidis, 2005).

Cons:

Dependent on the quality of the included studies (Ioannidis, 2005).

Prone to publication bias (Sackett et al., 1996).

Mixing studies with different methodologies can produce misleading results (Frieden, 2017).

Conducting systematic reviews can be resource-intensive (Cartwright & Deaton, 2018).

In some fields, a lack of high-quality studies makes meta-analysis difficult (Freemantle et al., 2003).

2. Randomized Controlled Trials (RCTs)

Pros:

Strong evidence for causality through randomization (Guyatt et al., 1995).

Minimizes confounding variables (Freemantle et al., 2003).

Provides reproducible results (Akobeng, 2005).

Blinding reduces the risk of bias (Sackett et al., 1996).

Allows precise control over experimental conditions (Greenhalgh, 2014).

Cons:

May lack external validity due to artificial settings (Bhatt & Mehta, 2016).

Expensive and time-consuming to conduct (Bondemark & Ruf, 2015).

Ethical challenges in withholding treatment (Cartwright & Deaton, 2018).

Industry sponsorship can introduce bias (Ioannidis, 2005).

Limited in representing diverse populations (Sørensen, 2006).

3. Cohort Studies

Pros:

Captures long-term outcomes (Euser et al., 2009).

Reflects real-world conditions better than RCTs (Cook & Thigpen, 2019).

Can study multiple outcomes from a single exposure (Frieden, 2017).

No ethical concerns over withholding treatment (Allen et al., 2015).

Suitable for studying rare exposures (Bhatt & Mehta, 2016).

Cons:

Susceptible to confounding factors (Freemantle et al., 2003).

Loss to follow-up can skew results (Euser et al., 2009).

Expensive due to long timeframes (Guyatt et al., 1995).

Selection bias may affect representativeness (Cook & Thigpen, 2019).

Misclassification errors may occur (Ioannidis, 2005).

4. Case-Control Studies

Pros:

Efficient for studying rare diseases (Aronson & Hauben, 2006).

Less resource-intensive than cohort studies (Akobeng, 2005).

Quick to conduct retrospectively (Freemantle et al., 2003).

Useful for identifying risk factors (Euser et al., 2009).

No need for randomization (Horn & DeJong, 2005).

Cons:

Recall bias affects accuracy (Ioannidis, 2005).

Cannot establish causality (Cleophas & Zwinderman, 2000).

Prone to selection bias (Freemantle et al., 2003).

Difficulties in matching controls (Guyatt et al., 1995).

Confounding factors are hard to control (Horn & DeJong, 2005).

5. Cross-Sectional Studies

Pros:

Quick and cost-effective (Freemantle et al., 2003).

Useful for estimating prevalence (Guyatt et al., 1995).

Generates hypotheses for further research (Aronson & Hauben, 2006).

Large sample sizes increase statistical power (Allen et al., 2015).

Provides a snapshot of a population at a given time (Greenhalgh, 2014).

Cons:

Cannot determine causality (Sackett et al., 1996).

Susceptible to recall bias (Frieden, 2017).

Only captures data at one point in time (Guyatt et al., 2011).

Misleading correlations may arise (Ioannidis, 2005).

Confounding variables are not easily controlled (Sackett et al., 1996).

The Purpose of Research Studies: Determining Causality

The ultimate goal of any well-designed research study is to determine causality—that is, to understand whether an intervention or exposure leads to an outcome (Sackett et al., 1996). This is why methodologies like RCTs, with their control over variables, are favored. However, observational studies like cohort and case-control designs also contribute by offering insights into real-world effects and long-term outcomes (Cartwright & Deaton, 2018).

The Role of Confounding Factors and Controlling for Their Effects

Controlling for confounding factors is critical in establishing valid research findings. Confounding occurs when an extraneous variable affects both the independent and dependent variables, skewing the results (Ioannidis, 2005). RCTs, with their random assignment, are designed to minimize the effects of confounders, while observational studies must rely on statistical adjustments (Freemantle et al., 2003).

The Use of Inferential Statistics to Generalize from a Sample to a Population

Research studies sample populations and use inferential statistics to make predictions about larger populations. However, generalizing results requires careful attention to variability and sample representativeness (Guyatt et al., 1995). In medical trials, for example, statistical significance helps to determine whether findings are likely to hold across broader groups, even though individual responses may vary greatly (Greenhalgh, 2014).

The Problem with Small Effect Sizes and Large Variability

One significant challenge in research is dealing with small effect sizes and large variability. Small effects can be difficult to detect, especially when variability is high among participants. High variability dilutes the signal of an intervention, making it harder to distinguish the true impact from noise (Ioannidis, 2005). This problem is particularly acute in fields like medicine and psychology, where human variability can obscure findings, even in well-designed RCTs (Guyatt et al., 1995; Bhatt & Mehta, 2016).

How Averages Tell Us Little About Individuals

While research studies provide average outcomes for a population, these averages may tell us very little about individual responses. Each person has unique biological, environmental, and psychological factors that influence how they respond to treatments or exposures (Greenhalgh, 2014). As a result, treatments that appear effective on average may be less effective or even harmful for specific individuals (Mulder et al., 2018). This highlights the limitations of population-level generalizations, especially in fields like medicine and education, where individual variability plays a large role (Braun, 2019).

The Distortion of "data is the plural of anecdote"

The phrase "data is the plural of anecdote" has been distorted by pseudo-skeptics to the phrase “data is not the plural of anecdote” in order to dismiss anecdotal evidence entirely. Originally, the phrase was used to remind researchers that anecdotes can sometimes point to meaningful trends and that collected data often emerge from individual anecdotes (Aronson & Hauben, 2006). Pseudo-skeptics, however, have flipped this meaning, using the phrase to imply that individual experiences are always irrelevant in scientific discourse (Cartwright & Deaton, 2018).

The Original Phrase: "Data is the Plural of Anecdote"

Contrary to its modern distortion, the original phrase was intended to highlight that data are, in fact, a collection of anecdotes, at least metaphorically. Each data point represents an individual instance, which, when aggregated, contributes to a broader understanding (Aronson & Hauben, 2006). The notion that anecdotal experiences can lead to valuable insights, especially when properly documented, remains relevant in areas like clinical practice and public health (Frieden, 2017).

Summary

The evidence hierarchy remains a useful framework for evaluating research, particularly in fields that rely on experimental control, such as medicine and public health. However, its rigid application can be problematic in disciplines that emphasize qualitative data or contextual factors. Research methods like RCTs are valuable for determining causality, but they are not without limitations, particularly when dealing with small effect sizes, high variability, and the complexities of real-world populations. Additionally, the distortion of phrases like "data is not the plural of anecdote" demonstrates how the misuse of concepts can undermine meaningful discourse. Ultimately, a balanced approach to evidence that recognizes both the strengths and limitations of each level in the hierarchy is essential for advancing knowledge across diverse fields.

References

Akobeng, A. K. (2005). Understanding Randomised Controlled Trials. Archives of Disease in Childhood, 90(8), 840–844. https://doi.org/10.1136/adc.2004.058222

This article provides a foundational introduction to the design, purpose, and strengths of randomized controlled trials (RCTs), aimed at helping clinicians and researchers understand how RCTs contribute to medical evidence. It details key concepts such as randomization, blinding, and the control of confounding variables, and is often cited as a teaching resource in clinical settings.

Allen, R. W., et al. (2015). Randomized Controlled Trials in Environmental Health Research: Unethical or Underutilized? PLoS Medicine, 12(1), e1001775. https://doi.org/10.1371/journal.pmed.1001775

This paper critically assesses the ethical and practical challenges in conducting RCTs in environmental health research, where withholding interventions may pose ethical concerns. The authors argue that while RCTs are considered the gold standard in many areas of medicine, they are underutilized in environmental research due to these constraints, leading to missed opportunities for rigorous evidence generation.

Althouse, A. D., et al. (2018). Response to “Why All Randomized Controlled Trials Produce Biased Results.” Annals of Medicine, 50(7), 545–548. https://doi.org/10.1080/07853890.2018.1514529

This article offers a defense of RCTs in the face of critiques about their inherent biases. Althouse and colleagues acknowledge that while RCTs can introduce certain types of bias, they remain one of the best methods for determining causal relationships in clinical research. The authors provide a balanced view, discussing both the strengths and potential pitfalls of the RCT design.

Aronson, J. K., & Hauben, M. (2006). Anecdotes That Provide Definitive Evidence. BMJ, 333(7581), 1267–1269. https://doi.org/10.1136/bmj.39036.666389.94

This paper explores the often-dismissed role of anecdotal evidence in scientific research, arguing that certain significant anecdotes can lead to definitive evidence in specific contexts. The authors discuss how rare but important individual cases have contributed to major scientific breakthroughs, calling for a more nuanced understanding of how anecdotal evidence can complement traditional data-driven methods.

Bhatt, D. L., & Mehta, C. (2016). Adaptive Designs for Clinical Trials. New England Journal of Medicine, 375(1), 65–74. https://doi.org/10.1056/NEJMra1510061

Bhatt and Mehta examine the use of adaptive designs in clinical trials, where modifications can be made to the trial protocol based on interim results. These designs offer increased flexibility, allowing for more efficient and ethical trials. The paper discusses various types of adaptive designs, including those that adjust dosing or sample size, and how they can improve trial outcomes.

Bhide, A., et al. (2018). A Simplified Guide to Randomized Controlled Trials. Acta Obstetricia et Gynecologica Scandinavica, 97(4), 380–387. https://doi.org/10.1111/aogs.13309

This guide is aimed at healthcare professionals and researchers looking to understand the basic principles of RCTs. It breaks down complex concepts such as randomization, blinding, and the use of control groups into easily understandable terms. The paper also discusses the importance of RCTs in clinical decision-making and offers practical advice for designing and conducting these trials.

Bondemark, L., & Ruf, S. (2015). Randomized Controlled Trial: The Gold Standard or an Unobtainable Fallacy? European Journal of Orthodontics, 37(5), 457–461. https://doi.org/10.1093/ejo/cjv046

Bondemark and Ruf challenge the notion that RCTs should always be considered the gold standard in clinical research, particularly in specialized fields like orthodontics. They highlight the ethical, logistical, and financial challenges that make RCTs impractical in certain contexts, and argue for the use of alternative research designs that still provide robust evidence without the need for strict randomization.

Braun, H. (2019). The Cognitive Outcomes of Liberal Education: A Longitudinal Study. The Andrew W. Mellon Foundation. https://mellon.org/news-blog/articles/cognitive-outcomes-liberal-education/

Braun's longitudinal study examines the cognitive benefits of a liberal education over time, contrasting it with more technical or specialized forms of education. The study's findings suggest that a broad-based education enhances critical thinking, problem-solving, and adaptability. The report argues for a more holistic approach to higher education, where cognitive outcomes go beyond immediate job preparation.

Cartwright, N., & Deaton, A. (2018). Understanding and Misunderstanding Randomized Controlled Trials. Social Science & Medicine, 210, 2–21. https://doi.org/10.1016/j.socscimed.2017.12.005

In this influential paper, Cartwright and Deaton critique the overreliance on RCTs in public health and economics, arguing that RCTs are often misapplied. While RCTs are useful for demonstrating causality under controlled conditions, they are less effective at answering broader, context-dependent questions. The authors suggest that a more nuanced approach is needed, incorporating a variety of research methods to generate useful insights.

Cleophas, T. J., & Zwinderman, A. H. (2000). Limitations of Randomized Clinical Trials: Proposed Alternative Designs. Clinical Chemistry and Laboratory Medicine (CCLM), 38(12), 1217–1223. https://doi.org/10.1515/CCLM.2000.192

This paper discusses the limitations of RCTs, particularly in clinical settings where patient variability can lead to skewed results. Cleophas and Zwinderman propose alternative research designs that still control for bias but are more flexible than traditional RCTs. They argue that these designs can be particularly useful in fields like pharmacology and personalized medicine, where strict randomization may not always be feasible.

Concato, J., Shah, N., & Horwitz, R. I. (2000). Randomized, Controlled Trials, Observational Studies, and the Hierarchy of Research Designs. New England Journal of Medicine, 342(25), 1887–1892. https://doi.org/10.1056/NEJM200006223422507

Concato and colleagues challenge the notion that RCTs should always be at the top of the evidence hierarchy. They argue that observational studies can provide valuable insights that complement RCTs, especially in fields where randomized trials are impractical or unethical. The paper advocates for a more flexible approach to evidence generation, taking into account the strengths of various research designs.

Cook, C. E., & Thigpen, C. A. (2019). Five Good Reasons to Be Disappointed with Randomized Trials. Journal of Manual & Manipulative Therapy, 27(2), 63–65. https://doi.org/10.1080/10669817.2019.1589697

Cook and Thigpen outline five key reasons why RCTs may not always live up to their reputation as the gold standard in research. These include the challenges of generalizing findings from highly controlled environments to real-world settings, as well as issues related to sample sizes and ethical concerns. They argue that while RCTs remain valuable, they are not without significant limitations.

Deaton, A., & Cartwright, N. (2018). Understanding and Misunderstanding Randomized Controlled Trials. Social Science & Medicine, 210, 2–21. https://doi.org/10.1016/j.socscimed.2017.12.005

This article critiques the over-reliance on RCTs in fields like economics and public health, where the conditions necessary for randomization often do not exist. Deaton and Cartwright argue that while RCTs are valuable in certain contexts, they are often misapplied in complex, real-world situations. They propose a more context-sensitive approach to research design that takes into account the limitations of randomization.

Euser, A. M., Zoccali, C., Jager, K. J., & Dekker, F. W. (2009). Cohort Studies: Prospective Versus Retrospective. Nephron Clinical Practice, 113(3), c214–c217. https://doi.org/10.1159/000235241

Euser and colleagues compare prospective and retrospective cohort studies, highlighting the advantages and limitations of each approach. While prospective studies are more reliable in terms of causality, retrospective studies can be more practical in terms of time and resources. This paper is frequently cited in discussions about study design in medical research, particularly in nephrology and other long-term health studies.

Freemantle, N., et al. (2003). Composite Outcomes in Randomized Trials: Greater Precision but with Greater Uncertainty? JAMA, 289(19), 2554–2559. https://doi.org/10.1001/jama.289.19.2554

This article examines the use of composite outcomes in RCTs, where multipleFreemantle, N., et al. (2003). Composite Outcomes in Randomized Trials: Greater Precision but with Greater Uncertainty? JAMA, 289(19), 2554–2559. https://doi.org/10.1001/jama.289.19.2554

This article critically examines the use of composite outcomes in RCTs, which increase statistical power but often introduce interpretive challenges due to the combination of multiple endpoints. Freemantle and colleagues discuss the trade-offs between precision and uncertainty when relying on composite outcomes, emphasizing the need for careful interpretation to avoid misleading conclusions in clinical trials.

Frieden, T. R. (2017). Evidence for Health Decision Making—Beyond Randomized Controlled Trials. New England Journal of Medicine, 377(5), 465–475. https://doi.org/10.1056/NEJMra1614394

Frieden argues that while RCTs are important for understanding causality, they are not always the best tool for guiding public health decisions. This article discusses how other forms of evidence, such as observational studies and real-world data, are essential for making informed decisions in public health. Frieden emphasizes the need for a comprehensive approach to evidence, especially in complex health interventions.

Greenhalgh, T. (2014). Narrative Based Medicine: Dialogue and Discourse in Clinical Practice. BMJ Books. https://doi.org/10.1136/bmj.312.7023.71

Greenhalgh advocates for the integration of narrative-based approaches with evidence-based medicine, highlighting that RCTs and systematic reviews may not fully capture the complexity of individual patient needs. The book focuses on the role of patient narratives in medical decision-making, arguing that storytelling is a crucial complement to quantitative data, particularly in fields where individualized care is paramount.

Guyatt, G. H., et al. (1995). Users’ Guides to the Medical Literature: II. How to Use an Article About Therapy or Prevention. JAMA, 274(1), 59–63. https://doi.org/10.1001/jama.274.1.59

This guide remains a seminal resource for clinicians looking to interpret research studies, particularly RCTs. Guyatt and colleagues offer a step-by-step approach to evaluating the validity and applicability of studies on therapy or prevention. The paper is a cornerstone of evidence-based medicine, providing a practical framework for translating research findings into clinical practice.

Guyatt, G. H., et al. (2011). GRADE Guidelines: 4. Rating the Quality of Evidence—Study Limitations (Risk of Bias). Journal of Clinical Epidemiology, 64(4), 407–415. https://doi.org/10.1016/j.jclinepi.2010.07.017

This paper is part of the GRADE guidelines, a systematic framework for assessing the quality of evidence in research. It highlights the limitations of RCTs, particularly concerning the risk of bias, and emphasizes the importance of considering study limitations across various types of evidence. The GRADE approach is widely used in developing clinical practice guidelines.

Horn, S. D., & DeJong, G. (2005). Rehabilitation Research and Randomized Controlled Trials: Finding the Balance. Archives of Physical Medicine and Rehabilitation, 86(12), 6-7. https://doi.org/10.1016/j.apmr.2005.08.115

This commentary explores the tension between using RCTs and other research methodologies in rehabilitation research. Horn and DeJong argue that while RCTs are valuable for determining causality, observational studies may offer better insights into real-world rehabilitation settings, where patient variability and complex interventions complicate the rigid designs of traditional RCTs.

Horn, S. D., DeJong, G., et al. (2005). Another Look at Observational Studies in Rehabilitation Research: Going Beyond the Holy Grail of the Randomized Controlled Trial. Archives of Physical Medicine and Rehabilitation, 86(12), 8–15. https://doi.org/10.1016/j.apmr.2005.08.116

This paper discusses the limitations of RCTs in rehabilitation research, where patient variability and complex treatments often challenge the validity of randomized designs. Horn and colleagues advocate for the inclusion of observational studies to capture more relevant data in clinical settings. The article emphasizes the importance of context in research design, particularly in fields focused on individualized patient care.

Ioannidis, J. P. (2005). Why Most Published Research Findings Are False. PLoS Medicine, 2(8), e124. https://doi.org/10.1371/journal.pmed.0020124

Ioannidis' groundbreaking article argues that a significant proportion of published research findings may be false, particularly those based on small sample sizes or poorly designed studies. The paper has been highly influential in discussions about the reproducibility crisis in science, highlighting issues such as bias, conflicts of interest, and the misuse of statistical methods in producing unreliable research findings.

Mulder, R., Singh, A. B., & Hamilton, A. (2018). The Limitations of Using Randomized Controlled Trials as a Basis for Developing Treatment Guidelines. Evidence-Based Mental Health, 21(1), 4–6. https://doi.org/10.1136/eb-2017-102701

Mulder and colleagues critique the over-reliance on RCTs for developing clinical guidelines, especially in mental health, where the complexity of psychiatric disorders often cannot be captured through RCTs alone. The paper suggests that RCTs should be complemented with other research designs to develop more comprehensive and individualized treatment guidelines in mental health care.

Sansoni-Fisher, R. W., Bonevski, B., Green, L. W., & D'Este, C. (2007). Limitations of the Randomized Controlled Trial in Evaluating Population-Based Health Interventions. American Journal of Preventive Medicine, 33(2), 155–161. https://doi.org/10.1016/j.amepre.2007.04.007

This paper critiques the application of RCTs to population-based health interventions, arguing that the complexity and multifactorial nature of public health initiatives are not always captured by traditional RCT designs. The authors advocate for the use of alternative methods, such as observational studies and real-world evidence, to evaluate the effectiveness of large-scale interventions.

Sackett, D. L., et al. (1996). Evidence-Based Medicine: What It Is and What It Isn’t. BMJ, 312(7023), 71-72. https://doi.org/10.1136/bmj.312.7023.71

Sackett and colleagues introduced the concept of evidence-based medicine (EBM) in this influential article. They argue that while RCTs and systematic reviews are crucial components of EBM, clinical expertise and patient preferences should also play a significant role in decision-making. The paper has been a touchstone in the development of EBM as a guiding framework for clinical practice.

Sørensen, H. T. (2006). Beyond Randomized Controlled Trials: A Critical Comparison of Trials with Nonrandomized Studies. Hepatology. https://doi.org/10.1002/hep.21404

Sørensen compares RCTs and nonrandomized studies in hepatology research, discussing when nonrandomized studies may provide more practical or ethical insights than RCTs. The paper highlights the limitations of RCTs in addressing complex, multifactorial diseases like liver disease, where patient-specific factors often necessitate more flexible research designs.

Saturni, S., Bellini, F., Braido, F., et al. (2014). Randomized Controlled Trials and Real-Life Studies. Approaches and Methodologies: A Clinical Point of View. Pulmonary Pharmacology & Therapeutics, 27(2), 129–138. https://doi.org/10.1016/j.pupt.2014.01.005

This paper contrasts the strengths of RCTs with real-life studies in clinical research. The authors argue that while RCTs offer high internal validity, their narrow inclusion criteria can limit generalizability. Real-life studies, although lower in the hierarchy of evidence, may provide more relevant insights for clinical practice, particularly in fields like pulmonology, where patient variability is high.

You might like

https://geoffpain.substack.com/p/fluoride-ntp-report-is-fluff